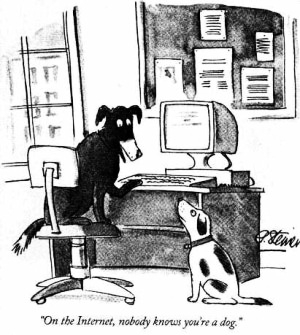

"On the internet, nobody knows you’re a dog."

So says the dog sitting at a computer in Peter Steiner’s 1993 New Yorker cartoon. The cartoon captured a radical change in the nature of human interactions that was just beginning in 1993, a change both exhilarating for its possibilities, and terrifying for the same reason.

Over the past quarter century, we’ve all learned the dog's lesson. A random stranger on the internet could be anybody, anywhere. A worldly impresario on a music forum could be a kid in his mom’s basement. A fourteen-year-old girl in a chatroom could be an undercover cop. The international business consultant who just sent you a connection request on LinkedIn could be a spy. The African oil heiress in your inbox is undoubtedly a scam artist.

But while we’ve learned to distrust user names and text more generally, pictures are different. You can't synthesize a picture out of nothing, we assume; a picture had to be of someone. Sure, a scammer could appropriate someone else’s picture, but doing so is a risky strategy in a world with Google reverse image search and the like. So we tend to trust pictures. A business profile with a picture obviously belongs to someone. A match on a dating site may turn out to be 10 pounds heavier or 10 years older than when the picture was taken, but if there’s a picture, the person obviously exists.

No longer. New adversarial machine learning algorithms allow people to rapidly generate synthetic 'photographs' of people who have never existed. Already, faces of this sort are being used in espionage.

Computers are good, but your visual processing systems are even better. If you know what to look for, you can spot these fakes at a single glance, at least for the time being. The hardware and software used to generate them will continue to improve, and it may be only a few years until humans fall behind in the arms race between forgery and detection.

Our aim is to make you aware of the ease with which digital identities can be faked, and to help you spot these fakes at a single glance.

Jevin West and Carl Bergstrom

Seattle, WA.